2013-07-01 11:43:57 -0400

UPDATE Oct 10, 2013: We've made great progress, and we're opening up the download links below. The latest build of Convert to STRIP for Windows should now process SafeWallet XML files correctly. Please give it a shot and let us know! -WG

UPDATE Oct 8, 2013: The latest versions of SafeWallet (3.0.x) are NOT compatible with our converter. We're working hard with a number of our customers and lots of sample data to resolve this as soon as we can, but it's turned out to be quite a bit more complex than anticipated. We will get it! But be advised that if you are planning to purchase STRIP and to use the converter specifically for SafeWallet data, it does not work right now. Please get in touch with us if you have any questions, and thanks for your patience! -W.Gray

One of the more popular password managers out there is SafeWallet. A few new customers have been checking out STRIP as an alternative after reading about our password manager and have asked us about how they might import their SafeWallet data directly into STRIP. On Friday we posted an updated version of our Convert to STRIP utility to add this new option for both Windows and OS X. Read on to see how it works.

Get STRIP Now »

Convert to STRIP for Windows »

Convert to STRIP for OS X »

Export your SafeWallet data

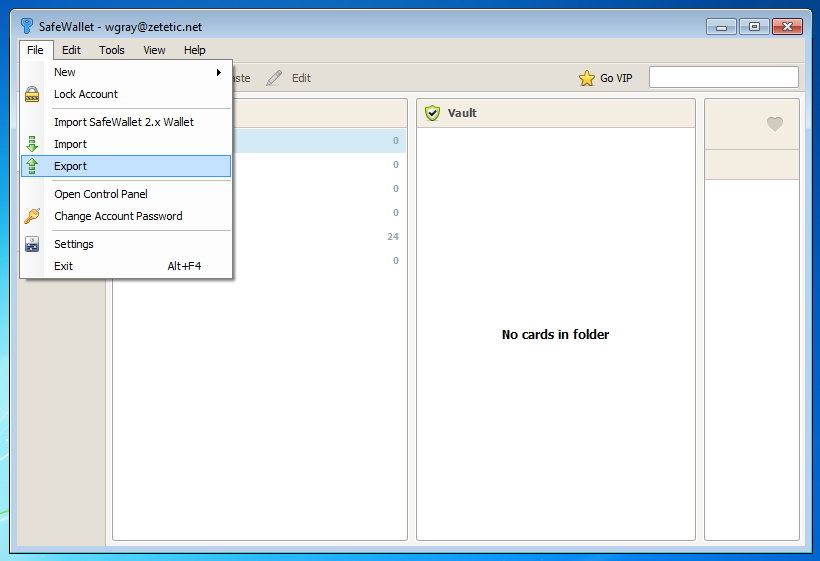

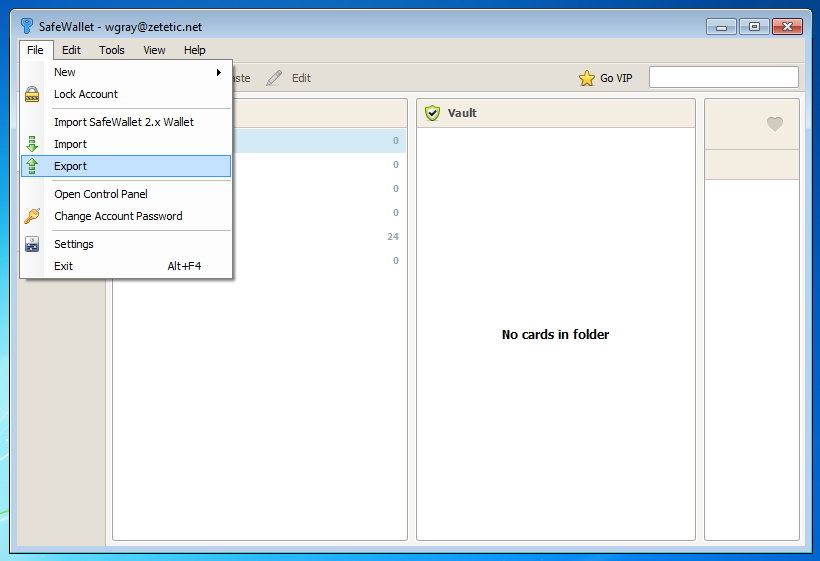

Launch SafeWallet, log in to your wallet, and select File -> Export. A simple export wizard will appear, allowing you to save an .XML file of your data anywhere on your computer.

Convert SafeWallet data

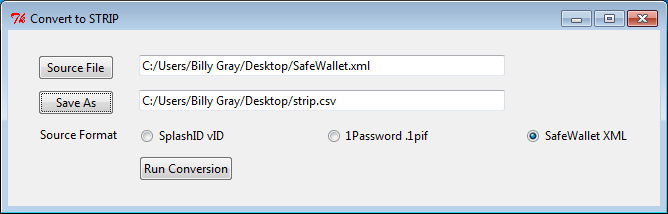

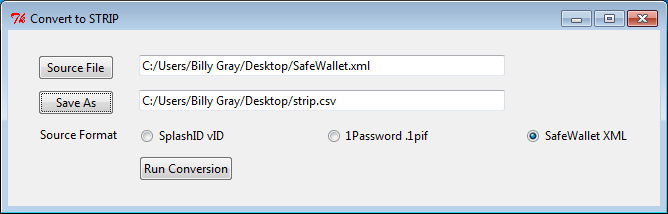

- Download the STRIP data converter onto your desktop and unzip it. Double-click the icon to run it.

- Click the "Source File" button and choose the SafeWallet XML export file.

- Click the "Save As" button and save strip.csv on the desktop.

- Make sure the "SafeWallet XML" radio button is selected for Source Format.

- Click "Run Conversion" to migrate the file to the STRIP export format.

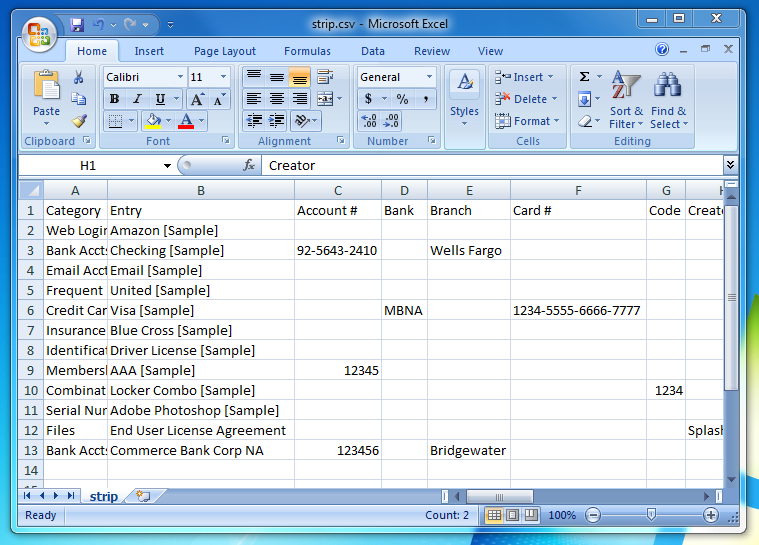

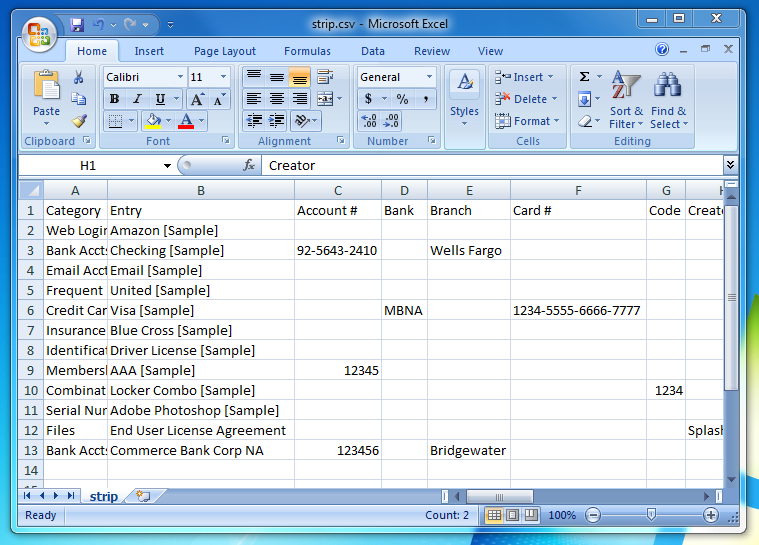

Verify your data

There is now a 'strip.csv' file on your Desktop. You can open it in a spreadsheet editor to check its contents (e.g. OpenOffice.org, Numbers, Excel), or open it in a simple text editor. It's a good idea to check the data over for accuracy before importing it into STRIP.

Note: if you decide to edit your CSV data before import, be sure to save the file as CSV data when done. Additionally, if your data contains international characters (e.g. ü, é, etc.), do not attempt to edit the file in Excel. Your best bet for preserving these characters correctly is the free Calc spreadsheet editor from OpenOffice.org.
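If you would rather not open the file in an editor at all, a quick scripted sanity check is another option. The following is a minimal sketch, not part of the converter: it assumes the exported file is UTF-8 encoded (the converter's actual encoding is not stated here), and the function name `summarize_csv` is made up for illustration. It reports how many rows the file has, how many fields each row contains, and how many rows include international characters, without printing any of your passwords.

```python
import csv
from collections import Counter


def summarize_csv(path, encoding="utf-8"):
    """Return basic facts about a CSV file without exposing any field values.

    The UTF-8 default is an assumption; a UnicodeDecodeError here suggests
    the file uses a different encoding or was damaged while editing.
    """
    widths = Counter()      # fields per row -> number of rows with that width
    non_ascii_rows = 0      # rows containing characters such as ü or é
    with open(path, newline="", encoding=encoding) as handle:
        for row in csv.reader(handle):
            widths[len(row)] += 1
            if any(not field.isascii() for field in row):
                non_ascii_rows += 1
    return {
        "rows": sum(widths.values()),
        "field_counts": dict(widths),
        "non_ascii_rows": non_ascii_rows,
    }
```

Calling `summarize_csv("strip.csv")` before and after editing makes it easy to spot a change in the row count, or rows that suddenly have a different number of fields than the rest.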

Import into STRIP

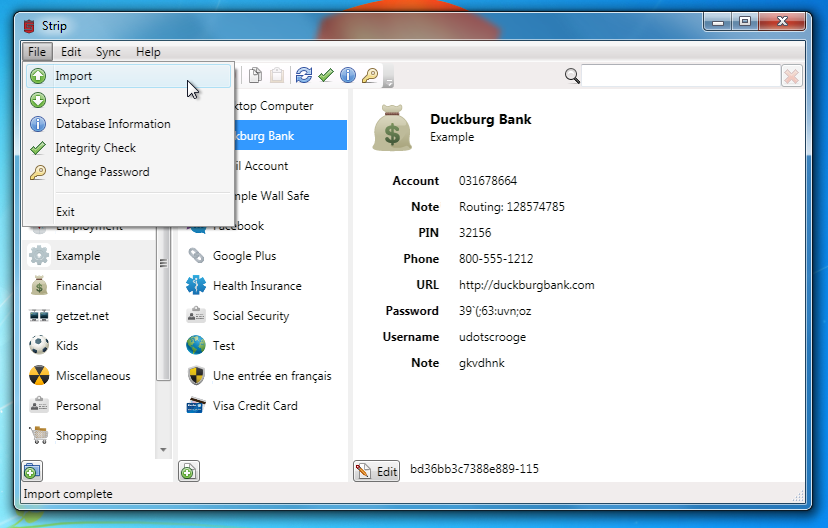

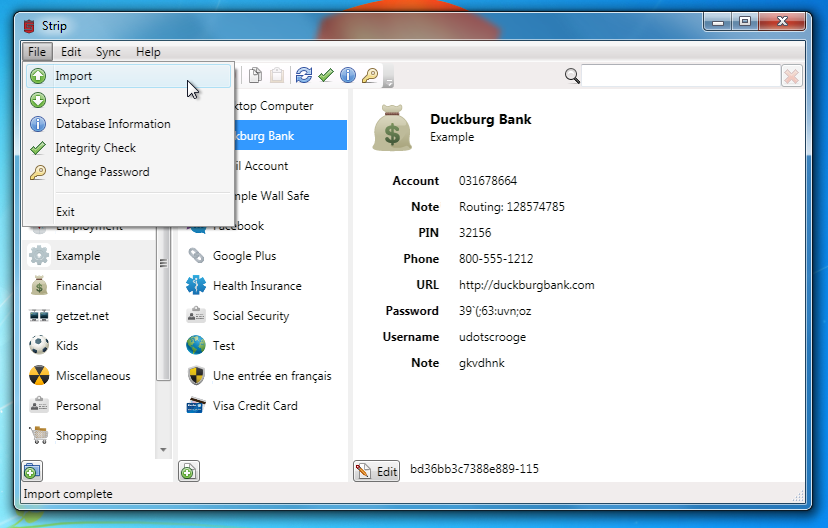

Log into STRIP on your PC or Mac and choose the strip.csv file on your Desktop.

Once the import is complete you'll see all of your SafeWallet data right in STRIP! Once you've checked that everything looks okay in STRIP you should delete the two plaintext import/export files (remember to empty your trash, or even better, securely delete them).
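For the clean-up step, here is a best-effort sketch of overwriting a plaintext file before removing it. The function name `overwrite_and_delete` is hypothetical and not part of STRIP or the converter. Treat it as an illustration only: on SSDs, on journaling or copy-on-write file systems, and wherever backups or sync tools have already copied the file, overwriting in place does not guarantee the old data is gone, so a dedicated secure-delete tool for your platform remains the safer choice.

```python
import os


def overwrite_and_delete(path, chunk_size=64 * 1024):
    """Overwrite a file with random bytes, flush it to disk, then delete it."""
    remaining = os.path.getsize(path)
    with open(path, "r+b") as handle:
        # Replace the existing contents in place, one chunk at a time.
        while remaining > 0:
            step = min(chunk_size, remaining)
            handle.write(os.urandom(step))
            remaining -= step
        handle.flush()
        os.fsync(handle.fileno())   # ask the OS to push the bytes to storage
    os.remove(path)
```

You would call it once for the SafeWallet XML export and once for strip.csv, using whatever paths you saved them to.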

2013-06-27 12:45:57 -0400

We would like to announce the release of SQLCipher 2.2.0. The following highlights the changes included in this release:

- Configurable crypto providers including experimental support for CommonCrypto and LibTomCrypt

- A change to the native CursorWindow to remove usage of private android::MemoryBase

- Renaming of generated binaries from sqlite3 to sqlcipher

- Based on SQLite version 3.7.17

We have renamed both the library and shell from sqlite3 to sqlcipher (e.g. libraries previously named libsqlite3.so are now named libsqlcipher.so, and the command line tool is now called sqlcipher instead of sqlite3). As the use of SQLCipher grows, this change will help avoid conflicts with system-installed versions of SQLite. We have also narrowed the usage of non-static interfaces within the source. Thanks to Hans-Christoph Steiner from the Guardian Project for contributing the patches he used to prep SQLCipher for Debian.
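After installing a build with the new names, it can be handy to confirm what is actually visible on a machine. The sketch below is only an illustration using the Python standard library; the function name `locate_sqlcipher` is made up, and whether the shared library is found depends on where it was installed and how your platform searches for libraries.

```python
import shutil
from ctypes.util import find_library


def locate_sqlcipher():
    """Report where the renamed and the system-provided tools are found, if at all."""
    return {
        name: {
            "shell": shutil.which(name),      # command line tool on PATH, or None
            "library": find_library(name),    # shared library name/path, or None
        }
        for name in ("sqlcipher", "sqlite3")
    }
```

Seeing separate entries for `sqlcipher` and `sqlite3` is the point of the rename: the two can be installed side by side without one shadowing the other.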

We are also introducing configurable crypto providers in this release. OpenSSL remains the default provider, but we have included experimental support for both the Apple CommonCrypto library and the open source LibTomCrypt library.

To support this change, we have added an additional configure flag called --with-crypto-lib. Currently, you can specify one of the following values: openssl, commoncrypto, libtomcrypt, or none for amalgamation builds. If this flag is not included at configure, OpenSSL is used by default.

An example of building SQLCipher with CommonCrypto follows:

    ./configure --enable-load-extension --enable-tempstore=yes \
        --with-crypto-lib=commoncrypto \
        CFLAGS="-DSQLITE_HAS_CODEC -DSQLITE_ENABLE_FTS3" \
        LDFLAGS="/System/Library/Frameworks/Security.framework/Versions/Current/Security"

Applications that compile in the SQLCipher amalgamation or use the Xcode project can experiment with CommonCrypto support by removing the openssl-xcode sub project and link dependencies, defining SQLCIPHER_CRYPTO_CC (i.e. adding a -DSQLCIPHER_CRYPTO_CC compiler flag), and adding Security.framework to the link libraries for the project.

An example of building SQLCipher with LibTomCrypt follows:

    ./configure --enable-load-extension --enable-tempstore=yes \
        --with-crypto-lib=libtomcrypt \
        CFLAGS="-DSQLITE_HAS_CODEC -DSQLITE_ENABLE_FTS3 \
        -I/Users/nparker/src/libtomcrypt-1.17/src/headers" \
        LDFLAGS="-L/Users/nparker/src/libtomcrypt-1.17/ -ltomcrypt"

Please note that the LibTomCrypt implementation is still in the formative stages, and should not be used for any real production implementations at this time due to limited entropy in RNG seeding.

Following compilation, once a key has been provided to properly initialize a cipher context, you can verify which provider you are using by executing the following read-only PRAGMA:

PRAGMA cipher_provider;
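As a rough sketch of running that check from a script, the example below pipes the key and the PRAGMA into the renamed `sqlcipher` command line shell. The helper names `build_script` and `query_cipher_provider` are hypothetical, and the exact text the shell prints is not assumed here: the function simply returns the last non-empty line of output, or `None` if the shell is not on the PATH. Note that pointing the shell at a path that does not exist may create a new database there.

```python
import shutil
import subprocess


def build_script(passphrase):
    """Build the statements to send: set the key first, then ask for the provider."""
    escaped = passphrase.replace("'", "''")   # double single quotes for SQL
    return f"PRAGMA key = '{escaped}';\nPRAGMA cipher_provider;\n"


def query_cipher_provider(db_path, passphrase, binary="sqlcipher"):
    """Return the shell's last output line, or None if the binary is missing."""
    executable = shutil.which(binary)
    if executable is None:
        return None
    result = subprocess.run(
        [executable, db_path],
        input=build_script(passphrase),   # sent on stdin, not the command line
        capture_output=True,
        text=True,
        check=True,
    )
    lines = [line.strip() for line in result.stdout.splitlines() if line.strip()]
    return lines[-1] if lines else None
```

Sending the statements on standard input rather than as command line arguments keeps the passphrase out of the process list, which seems like the more cautious default for a quick check like this.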

Finally, this release includes a fix to the native CursorWindow that removes the usage of android::MemoryBase. This resolves an issue under an upcoming Android platform release that marks this API as private and causes a crash.

The latest source can be found here [1]. We've also prepared a binary package of SQLCipher for Android here [2]. Please take a look, try out the new library changes and give us your feedback - we welcome it! Thanks!

[1] https://github.com/sqlcipher/sqlcipher

[2] https://s3.amazonaws.com/sqlcipher/SQLCipher+for+Android+2.2.0.zip

2013-06-03 13:01:26 -0400

Monday morning we released STRIP for OS X version 2.0.1 in the Mac App Store, and it should be available now. This is a minor but helpful maintenance update with some good bugfixes for version 2.0.0. All customers are encouraged to upgrade (remember to take a backup before upgrading). This release includes the following changes:

- Adds option to show password generator on edit entry context menu

- Shows number of entries below entries list

- Clarifies labels for operations under Sync menu

- Selects previously selected entry after login

- Fixes selection of entry upon tab from search field

- Fixes repeated locking notifications when application is already locked

- Fixes issue with CSV file import creating empty entries

- Fixes numeric-only password generation

Customers who're using the version of STRIP for OS X purchased from the Zetetic store will also see the update shortly. Select the STRIP menu and choose "Check for Updates" if automatic update checking is disabled under Preferences.

2013-05-30 08:32:42 -0400

CompTIA and viaForensics have just launched a new set of in-depth exams on mobile application security. The Mobile App Security+ exams are structured to ensure that candidates have the specific knowledge required to build native iOS or Android applications that secure local data, off-device communications, and back-end services.

Zetetic had an opportunity to review the exam objectives before launch, and they promise to ensure a solid foundation in a wide range of mobile security topics. If you're not yet convinced that mobile applications need an even greater focus on security, the article that accompanies the test program, Why Mobile App Development is a Risky Business, will change your mind by providing a realistic overview of common risks and challenges.

Registration for the Beta exams is free for a limited time (use promo codes "MAPS ADR Mkt beta" or "MAPS iOS Mkt beta") and definitely worth checking out for anyone building mobile applications today.

2013-05-14 13:40:51 -0400

Discussing new tools and good techniques with Zetetic and The Guardian Project

On Thursday June 6th Zetetic and The Guardian Project will be hosting an evening of short talks and conversation about the how and why of building secure mobile applications that keep the user's data encrypted and hidden from prying eyes. We'll have a few short presentations on tools like SQLCipher, IOCipher, and NetCipher and how they can be used in modern applications. We'll answer questions about general strategies and specific toolkits, and our developers will be available to chat afterwards over pizza and beer.

The event will be held at New Work City in New York, a fantastic coworking and event space at the edge of Tribeca and Chinatown, from 7-10pm. If you'd like to join us, please RSVP on eventbrite.com (it's free) so we can have an idea of how many folks to expect.

The evening's agenda will feature two or three short presentations discussing what's involved in building more secure applications, why this should be a critical focus for all developers, and how to easily integrate tools into your own projects, followed by Q&A for each. We'll also hold a slot open for short talks from members of the community who would like to share information on security-related projects they are working on (send us a note if you'd like to present a bit about your project).

After the discussions wrap up, we'll break for snack and chat time, and will be available to discuss the toolkits involved and approaches to application security in general. We can answer questions in a one-on-one capacity, discuss future plans for these projects, and we'd love to get your feedback if you're already using SQLCipher or one of the Guardian toolkits.

This will also be a good opportunity for those interested in the further development of these libraries to meet up in person, and we're really looking forward to that. So please join us! Bring your own projects, tell us what you're working on, let's talk some crypto!