2012-04-23 12:17:04 -0400

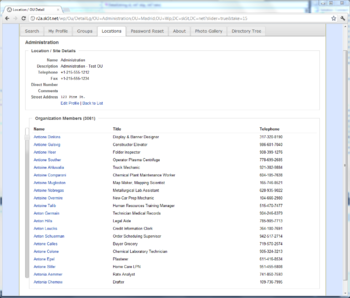

One of the lesser-understood topics in working with Active Directory and LDAP is the Virtual List View, or VLV. In a nutshell, VLV lets you, the LDAP application developer, query a very large directory container in efficient, bite-sized chunks. Consider, for example, a directory with one very large "Users" OU; e.g.:

dc=demo,dc=local

+ ou=DemoGroups (50,000 groups)

+ ou=DemoUsers (50,000 users)

If you'd like to present the contents of this OU in a scrolling or paged window in an application, retrieving 50 pages of 1,000 results each is probably not the most efficient option; this will tax the directory server, waste network traffic, and suck up a lot of memory in your app too (especially if you aren't picky about retrieving just the necessary attributes).

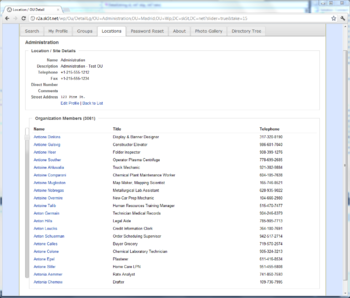

VLV aims to solve this problem by providing a way to seek to positions within a very large LDAP result set, in conjunction with a sorting rule. Want to show just the first, or fiftieth, set of 20 users in an OU, ordered by displayName? Then it's time for VLV! We use Virtual List Views in Connect's web and mobile interfaces to ensure a reliable, responsive user experience when browsing large directories. On the other side of the coin, Windows' Active Directory Users & Computers administrative tool should use VLV when possible, but doesn't; it just complains when an OU has more than 2,000 child objects and gives up.

This is actually a pretty common problem we see in LDAP client applications; often the developer has built and tested against a small directory, and/or tests with the highest privileges, which avoids LDAP administrative limits.

Here's a screenshot of Connect using Virtual List Views over AD to provide a very efficient slider control. Even though the OU has several thousand objects, clicking or dragging the slider provides nearly instantaneous results (about 70 milliseconds per click):

When including a VLV Request control in an LDAP query, we need to tell the directory server:

- How to sort the result set (without ordering, the idea of a "page" makes little sense)

- The position of the target entry (i.e., where in the list are we)

- How many entries before the target entry to fetch

- How many entries after the target to fetch

- An estimate of how many objects we think are in the view

Of particular note, the ordering rule is expressed by including a Sort Request Control. The fifth item, the estimated content count, helps the server seek to approximately the correct place in the result set. For example, if we ask the server for offset 500 in a view we think has 1,000 entries, but the directory data have changed so much that there are now 1,500 entries in the view, the server can adjust its answer to provide results at the position we probably wanted.
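To make those request fields and the offset adjustment concrete, here is a minimal Python sketch. It is an illustration only: `VlvRequest`, `page_to_vlv`, and `scale_offset` are hypothetical names rather than part of Zetetic.Ldap or any LDAP library, and the proportional scaling is just one simple way a server could interpret the content-count estimate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VlvRequest:
    """The information a VLV request carries (field names are illustrative)."""
    sort_attribute: str   # sent on the wire as a separate Sort Request Control
    target_offset: int    # 1-based position of the target entry
    before_count: int     # entries to fetch before the target
    after_count: int      # entries to fetch after the target
    content_count: int    # client's estimate of the view size (0 = no estimate)


def page_to_vlv(page, page_size, sort_attribute, content_count=0):
    """Translate a 1-based page number into VLV request fields."""
    if page < 1 or page_size < 1:
        raise ValueError("page and page_size must be positive")
    target = (page - 1) * page_size + 1
    return VlvRequest(sort_attribute, target, 0, page_size - 1, content_count)


def scale_offset(client_offset, client_count, server_count):
    """Scale the client's offset to the server's actual view size (assumed rule)."""
    if server_count < 1:
        return 0  # empty view: nothing to seek to
    if client_offset <= 1:
        return 1  # the first entry stays the first entry
    if client_count < 1:
        scaled = client_offset  # no estimate supplied: take the offset literally
    else:
        # Integer arithmetic, rounded to the nearest entry.
        scaled = (client_offset * server_count + client_count // 2) // client_count
    return min(scaled, server_count)


# The "fiftieth set of 20 users, ordered by displayName" from earlier in the post.
request = page_to_vlv(page=50, page_size=20, sort_attribute="displayName")
print(request.target_offset, request.after_count)   # 981 19

# Offset 500 of an estimated 1,000 entries, when the view really holds 1,500.
print(scale_offset(500, 1000, 1500))                # 750
```

With the numbers from the example above, the client's "halfway" request lands on entry 750 of 1,500 rather than entry 500.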

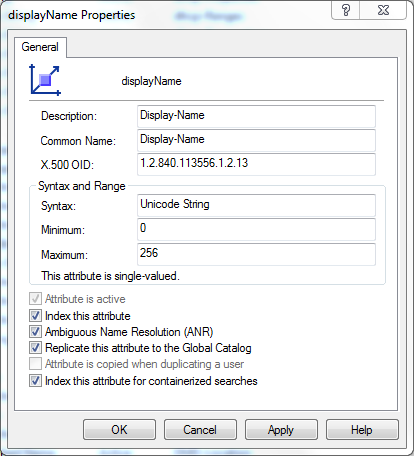

A couple of other things to keep in mind when using VLV. First, it works best when the sort control and LDAP filter line up; e.g., if we're sorting on the displayName attribute, then "(&(displayName=*))" is often a good search filter. Second, a "one level" LDAP search usually makes the most sense in conjunction with VLV, although a subtree search may sometimes do the right thing. If you issue a complicated or very specific filter along with a VLV control, the results may not quite be what you expect. Of course, you'll want to confirm that the sort attribute is VLV indexed by checking the attribute's searchFlags setting in the Schema container. Here's a quick way to find all the VLV-indexed attributes using Joe Richards' excellent adfind; it finds both one-level indexed attributes (searchFlags & 2) and subtree indexed attributes (searchFlags & 64):

adfind -schema -flagdc -bit -f searchFlags:OR:=66 searchFlags ldapDisplayName
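The value 66 in that filter is simply the two flag bits combined (2 + 64), and the OR match succeeds when either bit is set. The Python sketch below spells out the bit test; the constant and function names are made up for illustration, and the sample searchFlags values are invented rather than read from a real schema.

```python
ONE_LEVEL_INDEX = 2    # "searchFlags & 2" in the text above
SUBTREE_INDEX = 64     # "searchFlags & 64" in the text above
VLV_MASK = ONE_LEVEL_INDEX | SUBTREE_INDEX   # 66, the value passed to adfind


def vlv_index_kinds(search_flags):
    """Return which of the two index bits are set on a searchFlags value."""
    kinds = []
    if search_flags & ONE_LEVEL_INDEX:
        kinds.append("one-level")
    if search_flags & SUBTREE_INDEX:
        kinds.append("subtree")
    return kinds


def matches_or_filter(search_flags, mask=VLV_MASK):
    """Mimic a bitwise-OR match: true when any bit of the mask is set."""
    return (search_flags & mask) != 0


# Invented sample values, purely to exercise the bit tests.
sample = {"exampleAttrA": 1, "exampleAttrB": 2, "exampleAttrC": 65, "exampleAttrD": 66}
print([name for name, flags in sample.items() if matches_or_filter(flags)])
# ['exampleAttrB', 'exampleAttrC', 'exampleAttrD']
print(vlv_index_kinds(66))   # ['one-level', 'subtree']
```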

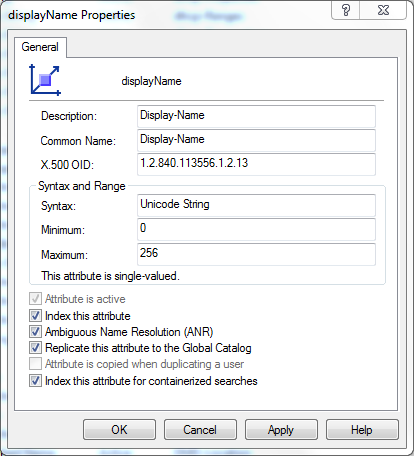

You might know the subtree index flag better from the Active Directory Schema Management console, where it's labeled as "Index this attribute for containerized searches" (is "containerized" a word? But I digress):

The Zetetic.Ldap project, available on GitHub and NuGet, provides code you can use directly or as a baseline for writing your own VLV requests.

Finally, here's a table of sample results on an Active Directory 2008R2 domain controller with some reasonably large OUs and various VLV options. This will help to give you a general idea of what VLV results to expect when applying specific vs. very general LDAP searches, using attributes that are or aren't indexed for VLV support. I'll just reiterate that this should support the conclusion that VLV works best on searches for an OU's immediate children, sorted and filtered on an attribute that has the subtree index bit set.

2012-03-29 12:55:51 -0400

A question on Twitter [1] [2] prompted us to take a look at the password hashing mechanisms available to the .NET Framework, and specifically to the standard SqlMembershipProvider.

For those who don't work with this aspect of ASP.NET, the .NET framework provides a simple, SQL Server-based store for web application user data, which includes user details like logon ID and email address, logon count, password failures, plus the password salt and password hash.

The membership provider can be configured to use any CLR class that implements System.Security.Cryptography.HashAlgorithm, always with a 16-byte salt, and the out-of-the-box hash algorithms are:

- MD5

- RIPEMD160

- SHA1

- SHA256

- SHA384

- SHA512

- (Keyed) HMAC

- (Keyed) HMACTripleDES

These algorithms are generally good for showing data integrity, but they aren't well-suited for password hashing because it's possible to run them at an extremely high speed--millions or hundreds of millions per second on a modern GPU--which means a low overall cost and effort to crack a list of leaked password hash data, despite salting. See here for Hacker News's favorite article about why these are unacceptable for hashing passwords.

In short, if an attacker were to gain access to the SQL database, it would be feasible to discover many of the passwords within via brute force because all of these hash algorithms are too fast. An attacker could then use these discovered, plaintext passwords to attempt to access other sites, impersonating your users (who, we suspect, have not diligently used a unique, random password at each site... all the more reason they should use STRIP).

Now, there are alternatives, one of which is already built in: The .NET Framework has included an implementation of Password Based Key Derivation Function 2 (PBKDF2) in the Rfc2898DeriveBytes class, going all the way back to .NET Framework 2. However, Rfc2898DeriveBytes does not derive from the HashAlgorithm base class, which is what would make it compatible with the ASP.NET SqlMembershipProvider or with other general-purpose programmatic .NET hashing interfaces.

The bcrypt algorithm is even more resistant to brute-force attacks (i.e., it's more computationally expensive), and there's already a .NET implementation of bcrypt, but it also does not implement HashAlgorithm.

Importantly, both PBKDF2 and bcrypt are adaptive algorithms: scaling up the effort needed to compute them is built into their design, such that if computers were 10x faster, you could ratchet up their work factors to make them do 10x more computation.
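To illustrate what an adjustable work factor looks like in practice, here is a small sketch using the PBKDF2 implementation in Python's standard library rather than the .NET classes discussed here. The function names are hypothetical, and the choice of HMAC-SHA1, a 16-byte salt, and 5,000 iterations is an assumption made only to echo the figures mentioned in this post.

```python
import hashlib
import hmac
import os

SALT_BYTES = 16  # mirrors the 16-byte salt mentioned above


def hash_password(password, salt=None, iterations=5000):
    """Derive a PBKDF2-HMAC-SHA1 digest; more iterations means more work."""
    if salt is None:
        salt = os.urandom(SALT_BYTES)
    digest = hashlib.pbkdf2_hmac("sha1", password.encode("utf-8"), salt, iterations)
    return salt, digest


def verify_password(password, salt, expected, iterations=5000):
    """Recompute the digest with the same salt and work factor, then compare."""
    _, candidate = hash_password(password, salt, iterations)
    return hmac.compare_digest(candidate, expected)


salt, stored = hash_password("correct horse battery staple")
print(verify_password("correct horse battery staple", salt, stored))   # True
print(verify_password("wrong guess", salt, stored))                    # False

# Raising the work factor changes the output, so the iteration count has to
# stay consistent between hashing and verification.
print(verify_password("correct horse battery staple", salt, stored, iterations=50000))  # False
```

The last line is the reason a work factor cannot be changed casually: a hash computed with one iteration count will not verify under another.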

Taking all this into account, we decided to build a simple .NET library that makes PBKDF2 and bcrypt work with SqlMembershipProvider and other areas within the .NET crypto API.

View the code here

Download a binary build here

Using the new hash algorithms

First, install Zetetic.Security.dll into the .NET Global Assembly Cache. You can either:

- Drag the file into C:\Windows\Assembly via Windows Explorer (which may require turning off UAC on Windows 7 / 2008), or

- Launch an elevated command prompt and use gacutil: gacutil /i Zetetic.Security.dll

Next, you'll need to register the new algorithms and aliases for them in the .NET Framework's "machine.config" file. For example, if you want to use the new algorithms with .NET 4 64-bit applications, launch an elevated command prompt and edit the file C:\Windows\Microsoft.NET\Framework64\v4.0.30319\machine.config. (Do this for each .NET Framework version that will need to take advantage of the new hash algorithms.) You'll want to add the following section just before the end of the file (or at least, not before the configSections area, which must always come first):

Almost there -- the only remaining task is to associate the new hash algorithm to your SqlMembershipProvider in the web application's Web.config file:

And, that's all there is to it. Of course, bear in mind that any pre-existing users in the database will need to reset their passwords, as the SqlMembershipProvider doesn't include any per-password details about the hash algorithm used to create it... so, simply applying this new configuration to an existing user database will cause every login attempt to fail, considering that the default algorithm is salted SHA1 or SHA256.

One important note: in order to achieve a balance of server performance and security, this version of Zetetic.Security uses 5,000 iterations of PBKDF2 and 2^10 rounds of bcrypt. It is certainly possible to increase these factors, but we'd opt to do so in separate classes, so that no easily-forgotten configurations are needed to maintain consistent hash results.

2012-03-21 11:32:50 -0400

In ElcomSoft's recent presentation demonstrating attacks on popular password managers, the researchers discovered an optimized brute force attack against 1Password due to the use of PKCS7 padding and lack of key strengthening. They suggest that this flaw makes 1Password easy to brute force unless a sufficiently complex password is used; for example, they estimate it would take less than a day to crack a 14-digit numeric password or a 7-character, fully random alphanumeric one.
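For a sense of scale, the search spaces behind those two examples can be worked out directly. The sketch below is simple arithmetic, not a reproduction of ElcomSoft's measurements; it assumes "alphanumeric" means 62 symbols (upper and lower case letters plus digits), and the helper names are made up for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def keyspace(alphabet_size, length):
    """Number of candidate passwords of exactly this length."""
    return alphabet_size ** length


def rate_to_exhaust(space, seconds=SECONDS_PER_DAY):
    """Guesses per second needed to try every candidate within the time limit."""
    return space / seconds


numeric_14 = keyspace(10, 14)   # 14-digit numeric password
alnum_7 = keyspace(62, 7)       # 7-character alphanumeric password (assumed 62 symbols)

print(numeric_14)   # 100000000000000
print(alnum_7)      # 3521614606208

for label, space in (("14-digit numeric", numeric_14), ("7-char alphanumeric", alnum_7)):
    print(f"{label}: {rate_to_exhaust(space):,.0f} guesses/second to exhaust in a day")
```

Exhausting the 14-digit space in a day takes more than a billion guesses per second, which is only practical when each guess is cheap; key strengthening such as PBKDF2 makes each guess more expensive.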

1Password is a popular and flexible application. They have rapidly responded to the concerns, encouraging the use of stronger passwords with the tool, planning to plug gaps by eliminating the use of PKCS7, adding PBKDF2 key derivation, and ensuring that all data is encrypted with the master key. These are excellent steps that should help to strengthen 1Password against brute force attacks.

Despite these planned changes, we've recently seen increased interest from users wanting to migrate data to STRIP because it was recognized at the same conference as the "most resilient to password cracking" and one of the only applications that properly implemented strong cryptography. As a result, we've expanded our previously announced Strip conversion tool to provide simple migration of 1Password exports.

The Convert to STRIP utility is free to use and runs on Windows and Mac OS X. This process assumes that you have already downloaded and installed STRIP Desktop or STRIP Sync and the Conversion tool. Once you have migrated the data on your desktop you can simply sync with STRIP for iOS to get the data onto your mobile device.

Get STRIP Now »

Convert to STRIP for Windows »

Convert to STRIP for OS X »

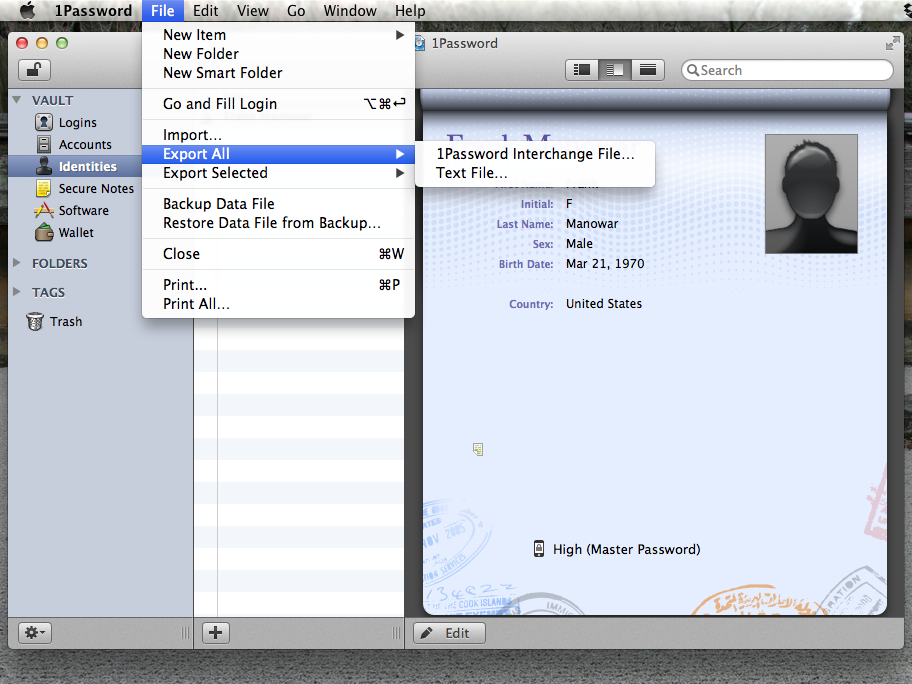

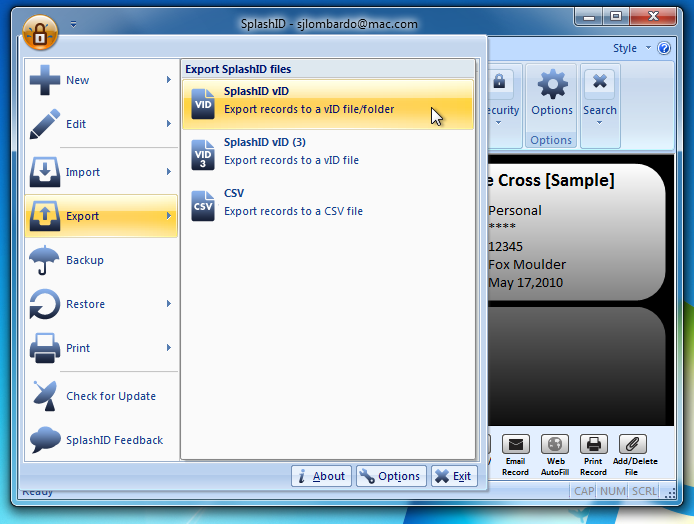

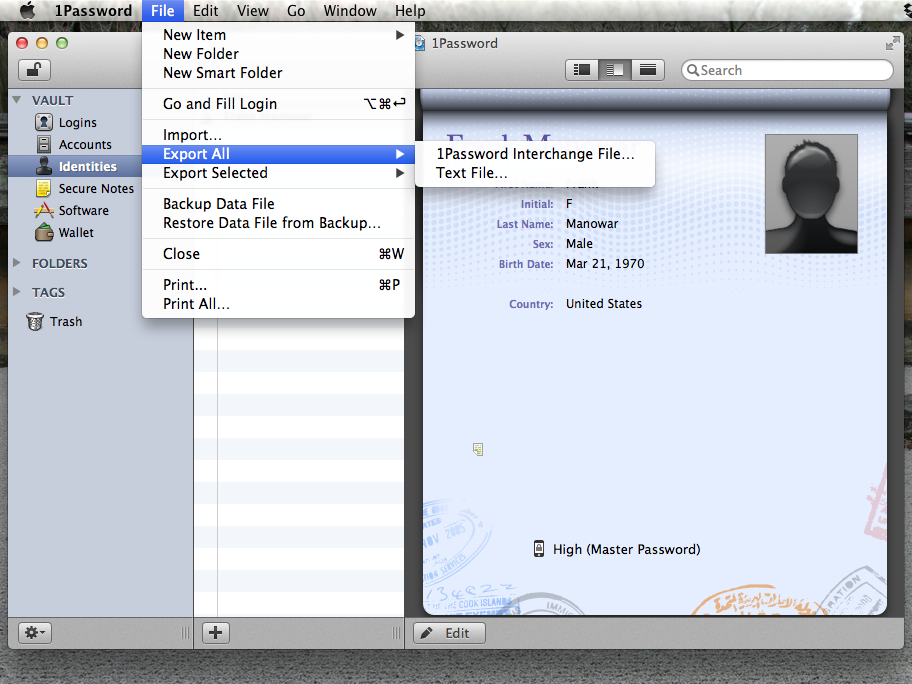

Export the 1Password data

Launch 1Password and log in. Once the application is unlocked, go to the application's File menu and select Export All -> 1Password Interchange File.... In the dialog that appears, you can keep the suggested name for the file, click on Where to select your Desktop, and then click Export.

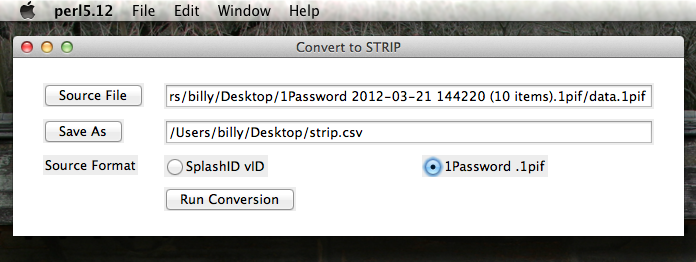

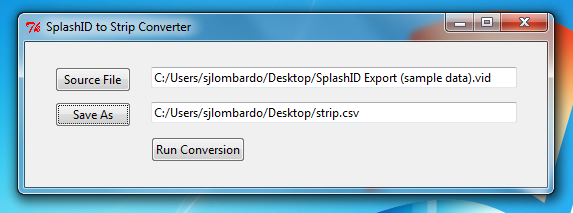

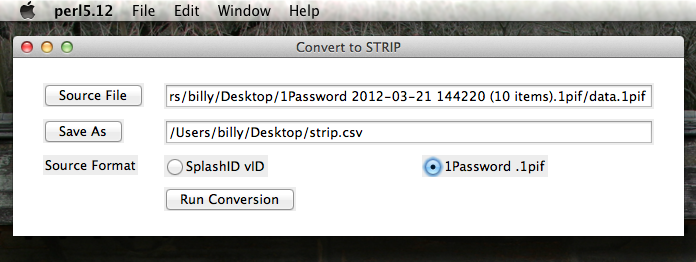

Convert 1Password Export

- Download the STRIP Data converter onto your desktop and unzip it. Double-click the icon to run it.

- Click the "Source File" button and choose the 1Password export file.

- Click the Save As button and save strip.csv on the desktop.

- Make sure that the "1Password .1pif" radio button is selected for Source Format.

- Click "Run Conversion" to migrate the file to the Strip export format.

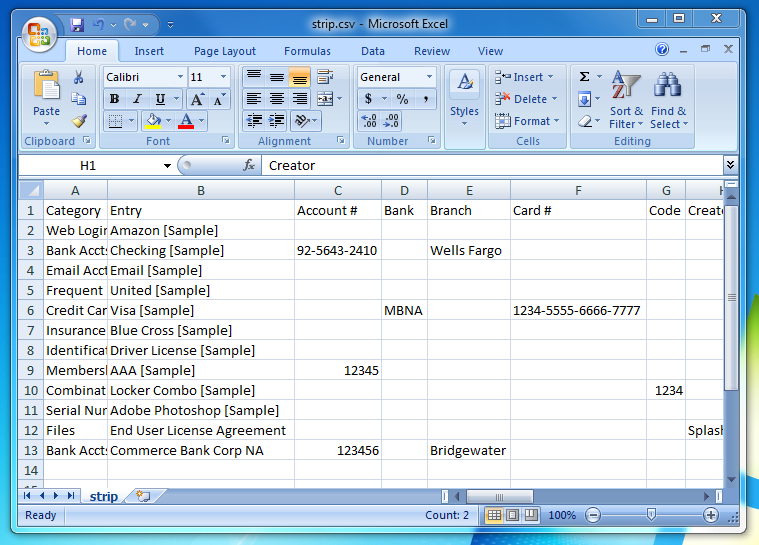

Verify Data (Optional)

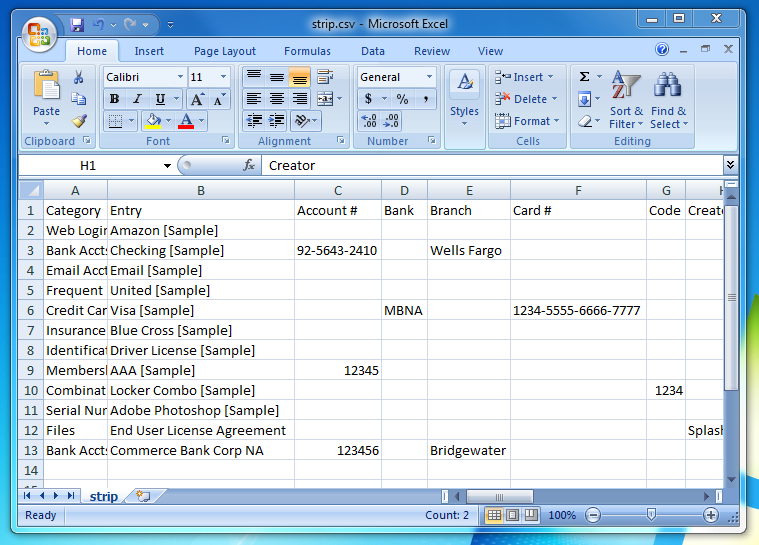

There is now a 'strip.csv' file on your Desktop. You can open it in a spreadsheet editor to check its contents (e.g. OpenOffice.org, Numbers, Excel), or open it in a simple text editor. It's a good idea to check the data over for accuracy before importing it into STRIP.
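If you'd rather not put plaintext passwords on screen, a short script can give a rough structural check instead. This is only a sketch: it assumes the file is ordinary comma-separated text in UTF-8, makes no assumptions about STRIP's actual column layout, and `summarize_csv` is a hypothetical helper name.

```python
import csv
from collections import Counter


def summarize_csv(path):
    """Count rows and columns-per-row without printing any field contents."""
    with open(path, newline="", encoding="utf-8-sig") as handle:
        rows = list(csv.reader(handle))
    widths = Counter(len(row) for row in rows)
    return {"rows": len(rows), "column_counts": dict(widths)}


# Example call (the path is illustrative):
#   summary = summarize_csv("strip.csv")
#   print(summary["rows"], summary["column_counts"])
```

A row count that roughly matches the number of entries you exported, and a consistent column count across rows, are reasonable signs that the conversion did not truncate or mangle the data.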

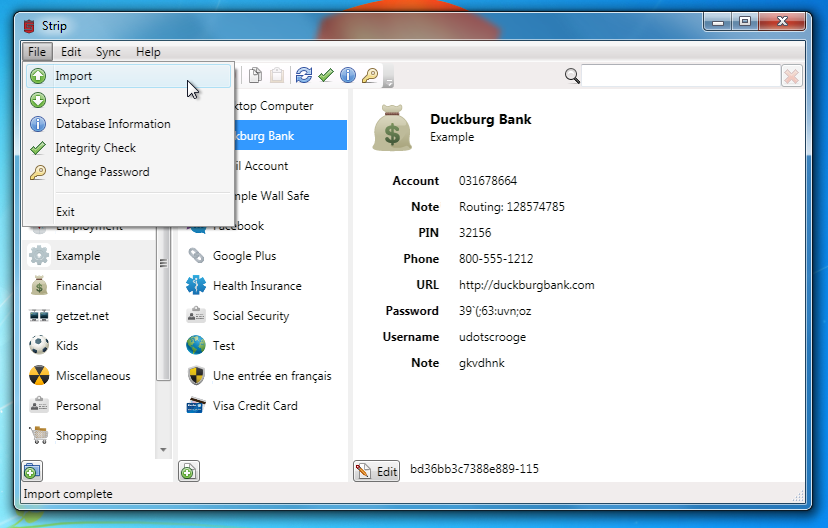

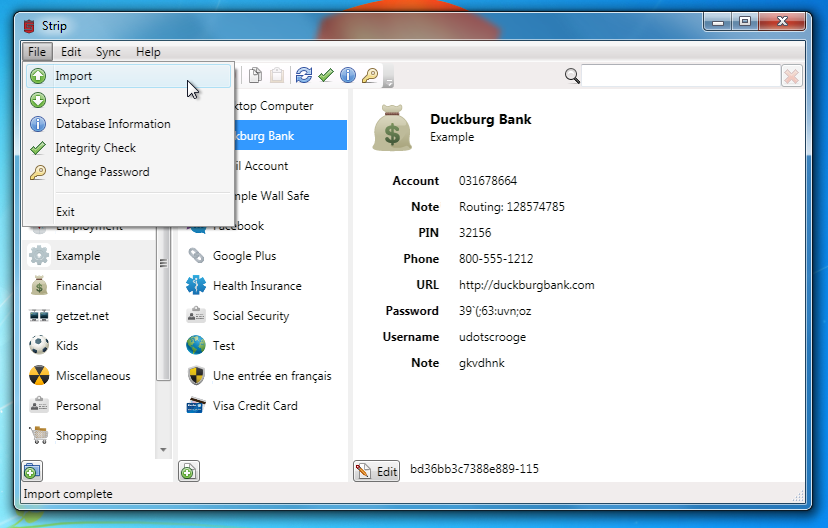

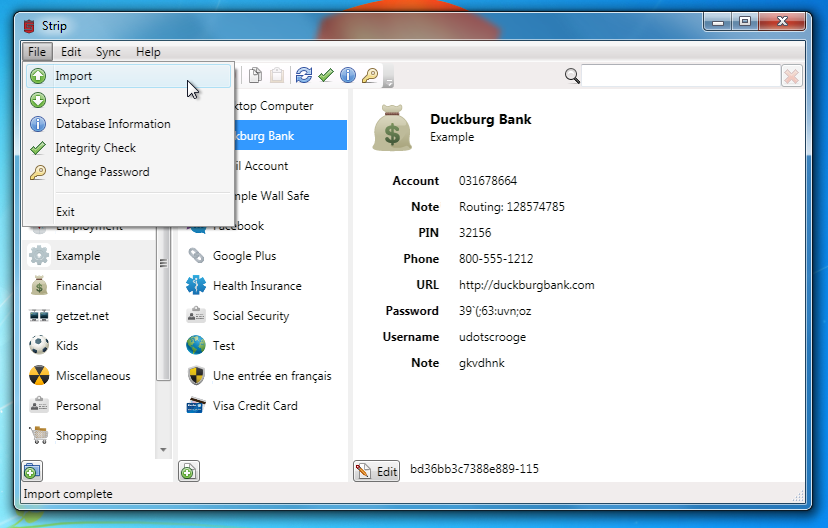

Import into STRIP

Log into STRIP Desktop or STRIP Sync, go to the File menu, select "Import...", and choose the strip.csv file on your Desktop.

Once the import is complete you'll see all of your 1Password data right in STRIP! Once you've checked that everything looks OK in STRIP, you should delete the two plaintext import/export files (remember to empty your trash, or even better, securely delete them).

2012-03-20 11:57:10 -0400

Hot on the heels of the Mac OS SplashID migration script, we've now prepared an easy-to-use, graphical SplashID converter called Convert To Strip, for Mac OS X and Windows.

In case you missed it, ElcomSoft recently presented a paper demonstrating attacks on several popular Password Managers. One of the most critical flaws was identified in SplashID, where the researchers found that a global key was used to encrypt the master password, rendering it instantly recoverable.

This converter is intended to help users migrate all of their sensitive data from a SplashID export file into STRIP, the password manager recognized at the same conference as the "most resilient to password cracking" and one of the only applications that properly implemented strong cryptography.

This process assumes that you have already downloaded and installed Strip.

Get STRIP Now »

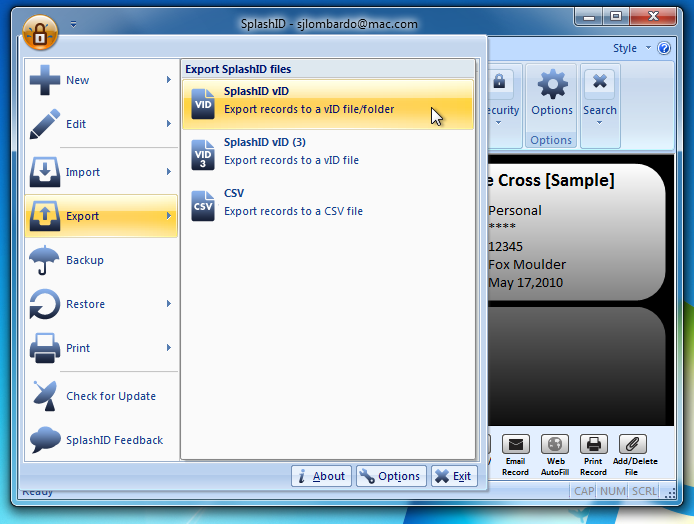

Export the SplashID data

Launch SplashID Safe and log in. Once the application is unlocked, go to the application menu, select Export, choose the first SplashID vID format, choose Export All Records, and un-check Export Attachments. You can keep the suggested name for the file, click on Where to select your Desktop, and then click Save. When prompted, enter a blank password.

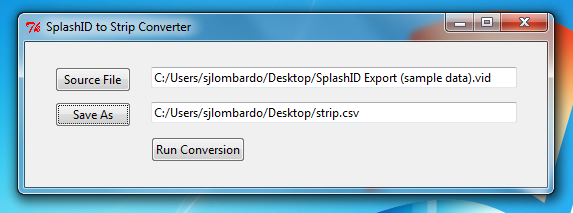

Convert SplashID Export

- Download the STRIP Data converter onto your desktop and unzip it. Double-click the icon to run it.

- Click the "Source File" button and choose the SplashID export file.

- Click the Save As button and save strip.csv on the desktop.

- Click "Run Conversion" to migrate the file to the Strip export format.

Convert to STRIP for Windows »

Convert to STRIP for OS X »

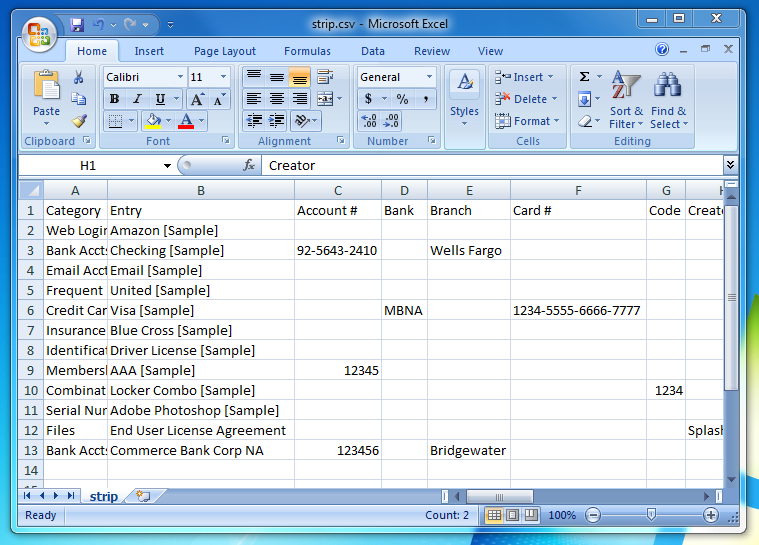

Verify Data (Optional)

There is now a 'strip.csv' file on your Desktop. You can open it in a spreadsheet editor to check its contents (e.g. OpenOffice.org, Numbers, Excel), or open it in a simple text editor. It's a good idea to check the data over for accuracy before importing it into STRIP.

Import into STRIP

Log into STRIP Desktop, go to the File menu, select "Import...", and choose the strip.csv file on your Desktop.

Once the import is complete you'll see all of your SplashID data right in STRIP! Once you've checked that everything looks OK in STRIP, you should delete the two plaintext import/export files (remember to empty your trash, or even better, securely delete them).

2012-03-20 09:26:48 -0400

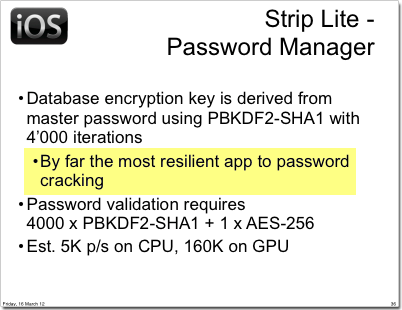

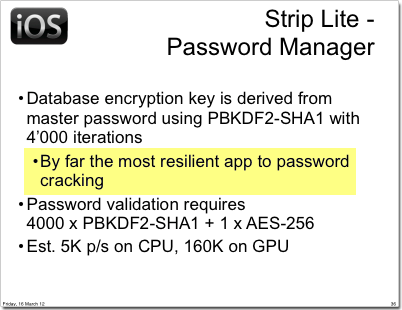

During the third day of the BlackHatEU conference, Andrey Belenko and Dmitry Sklyarov of ElcomSoft presented an analysis of iOS and BlackBerry password managers entitled “Secure Password Managers” and “Military-Grade Encryption” on Smartphones: Oh, Really? It was a complete analysis of 17 of the most popular password management programs, showing that many password managers store data in an unencrypted format, "encrypted so poorly that they can be recovered instantly", or are susceptible to basic cracking techniques.

One of the apps reviewed in the paper was Zetetic's Strip Lite, which is backed entirely by SQLCipher. As such, the results of their findings hold true for all apps using SQLCipher. From the reports we've heard it was noted as the one exception that properly implemented strong cryptography, was "by far the most resilient app to password cracking", and the most secure app for iOS.

However, the paper includes some brute force cracking estimates that serve as an important reminder about the need to use a strong passphrase when encrypting data. Their estimates show clearly that, given suitably fast GPUs, it would be possible to brute force standard numeric PINs due to their low entropy / small search space.

This topic has been discussed exhaustively on the SQLCipher mailing list in the past, and it bears repeating: the security of SQLCipher is highly dependent on the security of the key used. Even though we take great pains to increase the difficulty of brute force attacks using PBKDF2, and to avoid dictionary / rainbow table attacks with the per-database salt, these techniques can only be successful if a suitably strong key is chosen.
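The interaction between key strength and key stretching is easy to see with a little arithmetic. The following sketch is illustrative only: the helper names are hypothetical, and the iteration count of 1,000 is an arbitrary round number chosen for the example, not SQLCipher's actual setting.

```python
import math


def entropy_bits(alphabet_size, length):
    """Entropy of a uniformly random secret, in bits."""
    return length * math.log2(alphabet_size)


def total_hash_operations(alphabet_size, length, kdf_iterations):
    """Worst-case work for an attacker: every candidate costs one full KDF run."""
    return (alphabet_size ** length) * kdf_iterations


ILLUSTRATIVE_ITERATIONS = 1000  # arbitrary example value, not a real configuration

for label, alphabet, length in (
    ("4-digit PIN", 10, 4),
    ("8-char lowercase", 26, 8),
    ("12-char mixed alphanumeric", 62, 12),
):
    bits = entropy_bits(alphabet, length)
    work = total_hash_operations(alphabet, length, ILLUSTRATIVE_ITERATIONS)
    print(f"{label}: {bits:.1f} bits, {work:.3e} hash operations")
```

Key stretching multiplies the attacker's work by a constant factor, while each extra character multiplies it by the size of the alphabet, which is why a short numeric PIN stays within reach no matter how carefully the key derivation is done.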

The original whitepaper contains all of the source information, and you can also read more about our initial thoughts on the analysis.